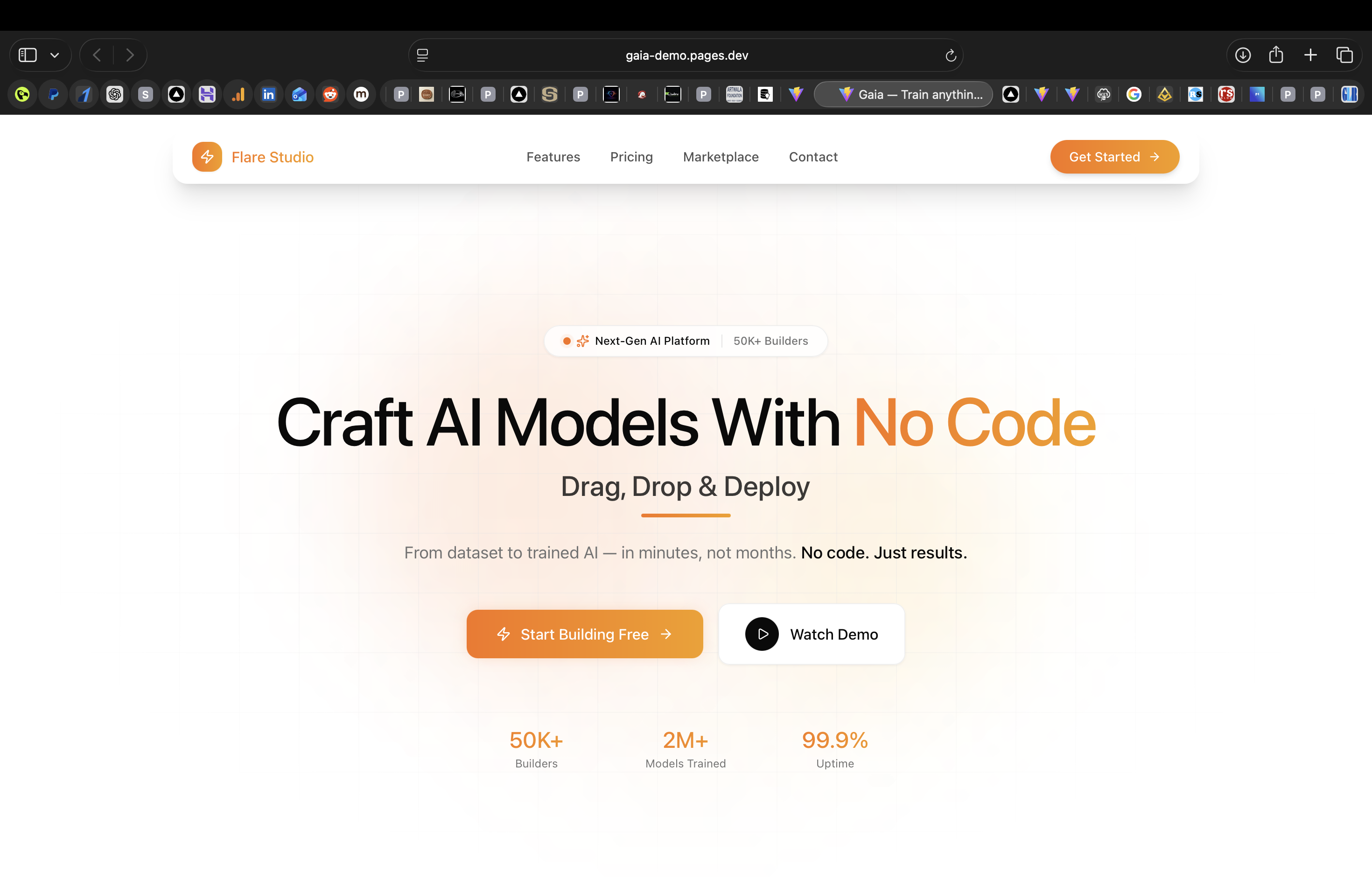

Gaia / Flare Studio

Drag, Drop & Deploy: No-Code AutoML Platform

Builders: 50K+

Models trained: 2M+

Training speedup: up to 10×

Timeline: 14 weeks

Who this was for

Technical builders, product developers, and small data teams who have labelled datasets and a clear business problem but lack ML engineering bandwidth. Target profile: startup founders and solo developers who need a trained model served behind a REST API without managing cloud GPU infrastructure.

The Problem

Building, training, and deploying machine learning models requires deep expertise in data science, infrastructure, and MLOps. Most product builders have the data and the business problem, but not the ML engineering bandwidth to go from a CSV file to a deployed REST API.

Existing AutoML tools (Google Vertex AI, Amazon SageMaker) are enterprise-priced and require cloud-native expertise to operate. Builders needed a platform where they could upload a dataset, describe the task in plain English, and receive a trained, deployed model, with every architectural decision handled automatically.

Constraints

AutoML pipeline had to support classification, regression, NLP, and computer vision tasks from a single interface.

The entire stack had to run on a Hostinger VPS with no cloud GPU budget, so resource scheduling across Celery workers had to be efficient.

Bayesian optimisation had to be parallelisable across the Celery fleet without job contention or redundant training runs.

Model export format (ONNX, H5, PKL) had to be determined by target runtime, not chosen manually by the builder.

Full training lineage (hyperparameters, validation scores, data version) had to be persisted for every single trained model.

How Nexolve Built It

Gaia is a fully Dockerised agentic AutoML platform: a Next.js builder studio, a FastAPI backend, a Celery worker fleet, and a Redis broker. An AI orchestrator handles data profiling, architecture search, training, and deployment end-to-end.

1. Docker Compose orchestration

Two Next.js portals (builder studio and admin), a FastAPI backend, Celery workers, a Redis broker, and PostgreSQL are all containerised and deployed on a Hostinger VPS with a single docker-compose up command.

2. Automated data profiling

Each dataset upload triggers a Celery task that runs automated profiling (column type detection, missing value analysis, distribution statistics, and correlation matrices) before the user even describes their task.
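As a rough illustration of the profiling step, the sketch below computes a per-column type, missing-value count, and basic distribution statistics from CSV text using only the Python standard library. The function name profile_csv and the shape of the returned dictionary are assumptions made for this example, not Gaia's actual task code; correlation matrices and the Celery wiring are left out.

```python
import csv
import io
import statistics


def _as_float(value):
    """Return the value parsed as a float, or None if it is not numeric."""
    try:
        return float(value)
    except ValueError:
        return None


def profile_csv(text):
    """Profile CSV text: column type, missing count, and simple statistics.

    Hypothetical helper for illustration only.
    """
    reader = csv.DictReader(io.StringIO(text))
    rows = list(reader)
    profile = {}
    for column in reader.fieldnames or []:
        raw = [row.get(column) for row in rows]
        # Treat empty strings and short rows (None) as missing values.
        present = [v for v in raw if v is not None and v.strip() != ""]
        numbers = [_as_float(v) for v in present]
        is_numeric = bool(present) and all(n is not None for n in numbers)
        entry = {
            "type": "numeric" if is_numeric else "categorical",
            "missing": len(raw) - len(present),
        }
        if is_numeric:
            entry["mean"] = statistics.fmean(numbers)
            entry["stdev"] = statistics.stdev(numbers) if len(numbers) > 1 else 0.0
        else:
            entry["distinct"] = len(set(present))
        profile[column] = entry
    return profile


if __name__ == "__main__":
    sample = "age,city\n30,Pune\n,Delhi\n40,Pune\n"
    for name, stats in profile_csv(sample).items():
        print(name, stats)
```

In a deployment like the one described, a function of this kind would presumably be wrapped in a Celery task and its result stored alongside the dataset, so the profile is ready when the builder describes the task.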

3. Bayesian-optimised architecture search

The Celery worker fleet executes Bayesian hyperparameter optimisation in parallel, searching model architectures and configurations. Transfer learning from pre-trained checkpoints accelerates convergence by up to 10×.
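One way to keep parallel workers from training the same configuration twice is to fingerprint each candidate and let only the first worker that claims the fingerprint proceed. The sketch below shows that idea with an in-memory registry guarded by a lock. TrialRegistry, trial_key, and the worker ids are hypothetical names; in a real fleet the claim would have to live in a shared store (an atomic set-if-absent such as Redis SET with the NX option is one candidate), and the case study does not say which mechanism Gaia uses.

```python
import hashlib
import json
import threading


def trial_key(params):
    """Stable fingerprint of a hyperparameter configuration.

    Keys are sorted so that dictionaries with the same content but a
    different insertion order produce the same fingerprint.
    """
    canonical = json.dumps(params, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class TrialRegistry:
    """In-memory stand-in for a shared claim store (assumption for this sketch)."""

    def __init__(self):
        self._lock = threading.Lock()
        self._claimed = {}

    def claim(self, params, worker_id):
        """Return True if this worker won the claim, False if already taken."""
        key = trial_key(params)
        with self._lock:
            if key in self._claimed:
                return False
            self._claimed[key] = worker_id
            return True


if __name__ == "__main__":
    registry = TrialRegistry()
    print(registry.claim({"lr": 0.01, "depth": 4}, "worker-1"))
    print(registry.claim({"depth": 4, "lr": 0.01}, "worker-2"))
```

A worker that loses the claim would ask the optimiser for a different candidate instead of starting a redundant training run.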

4. Multi-format model export

Trained models are exported as ONNX, H5, or PKL depending on the target runtime. Each export is versioned and stored with its full training metadata: hyperparameters, validation scores, and data lineage.
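The export rule and the lineage record can be sketched together. In the example below the format is looked up from the target runtime rather than chosen by the builder, and every export carries its hyperparameters, validation scores, and data version. The runtime names, the mapping itself, and the ModelExport fields are assumptions for illustration; the case study only names the three formats and the kinds of metadata stored.

```python
import json
from dataclasses import asdict, dataclass

# Hypothetical runtime-to-format mapping; the real rules are not given in the source.
EXPORT_FORMAT_BY_RUNTIME = {
    "onnxruntime": "onnx",
    "keras": "h5",
    "scikit-learn": "pkl",
}


def choose_export_format(target_runtime):
    """Pick the export format from the target runtime, never from user input."""
    try:
        return EXPORT_FORMAT_BY_RUNTIME[target_runtime]
    except KeyError:
        raise ValueError(f"unsupported target runtime: {target_runtime!r}") from None


@dataclass(frozen=True)
class ModelExport:
    """Lineage record stored with each versioned export (illustrative fields)."""

    model_id: str
    version: int
    export_format: str
    hyperparameters: dict
    validation_scores: dict
    data_version: str

    def to_json(self):
        """Serialise the record with sorted keys for stable storage and diffing."""
        return json.dumps(asdict(self), sort_keys=True)


if __name__ == "__main__":
    record = ModelExport(
        model_id="churn-classifier",
        version=3,
        export_format=choose_export_format("onnxruntime"),
        hyperparameters={"learning_rate": 0.01},
        validation_scores={"accuracy": 0.9},
        data_version="dataset-v2",
    )
    print(record.to_json())
```

Keeping the record immutable and serialising it with sorted keys makes it straightforward to trace any exported artefact back to the configuration that produced it.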

5. Subscription billing with Razorpay

Google OAuth handles authentication. Razorpay manages subscription billing, with webhook handlers that update compute entitlements (training GPU hours, concurrent jobs, and model storage limits) in real time.
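A webhook handler of this kind usually does two things: verify that the request really came from the payment provider, then map the subscription's plan to a set of limits. The sketch below assumes Razorpay's documented scheme of an HMAC-SHA256 hex digest of the raw request body, keyed with the webhook secret and sent in the X-Razorpay-Signature header. The event names, the payload path, the plan ids, and the limit values are assumptions for this example and should be checked against Razorpay's webhook documentation and the platform's real plans.

```python
import hashlib
import hmac
import json

# Hypothetical plan ids and limits, for illustration only.
ENTITLEMENTS_BY_PLAN = {
    "plan_starter": {"training_gpu_hours": 10, "concurrent_jobs": 1, "storage_gb": 5},
    "plan_pro": {"training_gpu_hours": 100, "concurrent_jobs": 4, "storage_gb": 50},
}

# Assumed event names that should refresh entitlements.
HANDLED_EVENTS = ("subscription.activated", "subscription.charged")


def verify_signature(raw_body, signature, secret):
    """Compare the received signature with an HMAC-SHA256 of the raw body."""
    expected = hmac.new(secret.encode("utf-8"), raw_body, hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected.encode("utf-8"), signature.encode("utf-8"))


def handle_webhook(raw_body, signature, secret, entitlements):
    """Update the entitlements store; return True only if an update was applied."""
    if not verify_signature(raw_body, signature, secret):
        return False
    event = json.loads(raw_body)
    if event.get("event") not in HANDLED_EVENTS:
        return False
    # Assumed payload layout: payload -> subscription -> entity.
    subscription = event["payload"]["subscription"]["entity"]
    limits = ENTITLEMENTS_BY_PLAN.get(subscription["plan_id"])
    if limits is None:
        return False
    entitlements[subscription["id"]] = dict(limits)
    return True
```

The signature must be computed over the raw request bytes, before any JSON parsing, because re-serialising the payload can change whitespace or key order and invalidate the digest.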

Tech Stack

Next.js, FastAPI, Celery, Redis, PostgreSQL, Docker Compose, Google OAuth, Razorpay, Hostinger VPS

Outcomes

50K+ builders onboarded, from solo developers to small data teams.

2M+ models trained with automated architecture search and hyperparameter optimisation.

Up to 10× training speedup via transfer learning from pre-trained checkpoints.

Multi-format export (ONNX, H5, PKL) with full lineage: every model is traceable to its training config.

Zero ML expertise required: natural-language task descriptions drive the entire pipeline.
