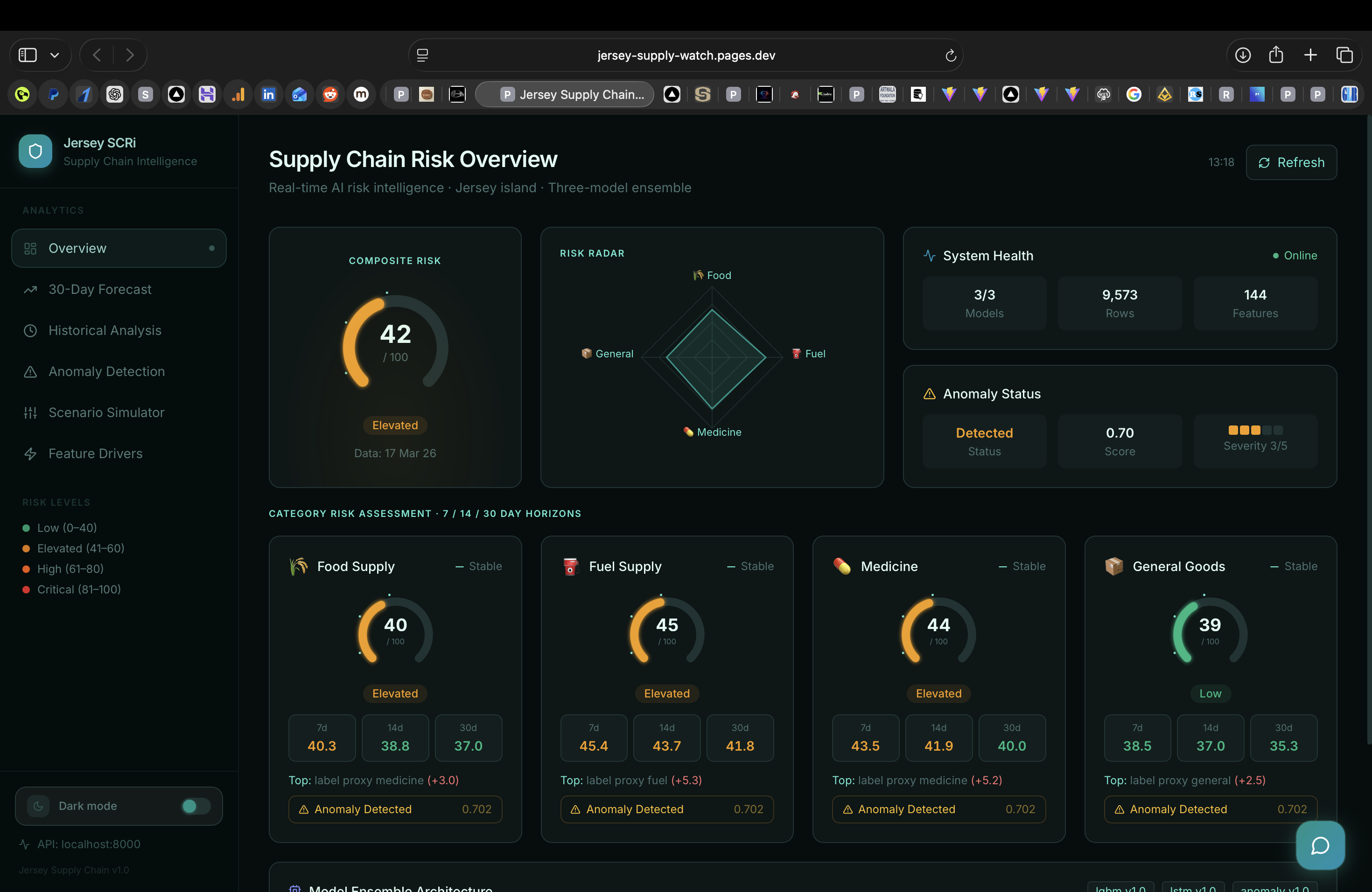

Jersey Supply Chain AI

Real-Time AI Risk Intelligence for a Nation's Supply Chain

3-model ensemble

Model Architecture

9,573

Training Rows

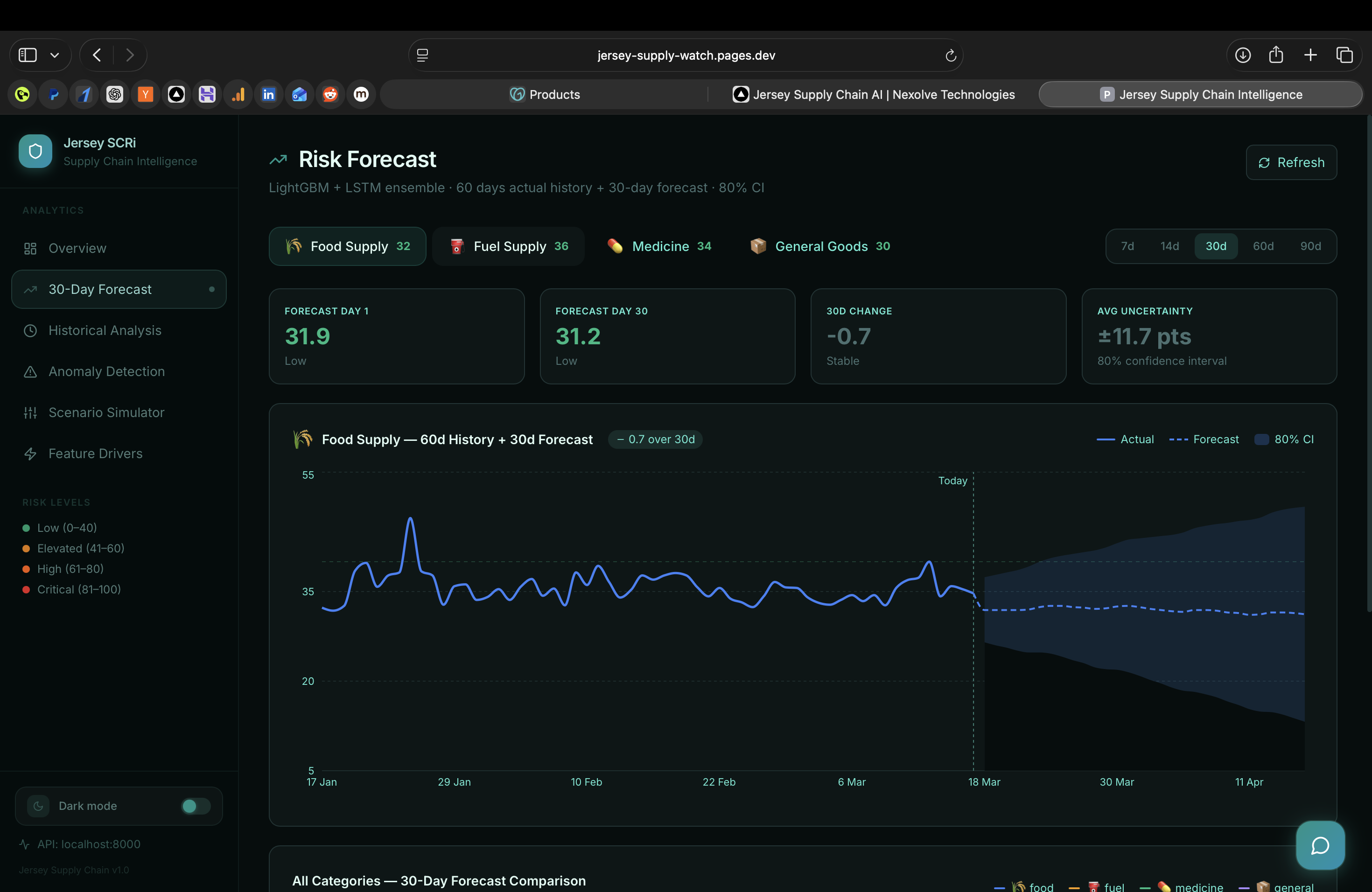

30 days

Forecast Horizon

About the Project

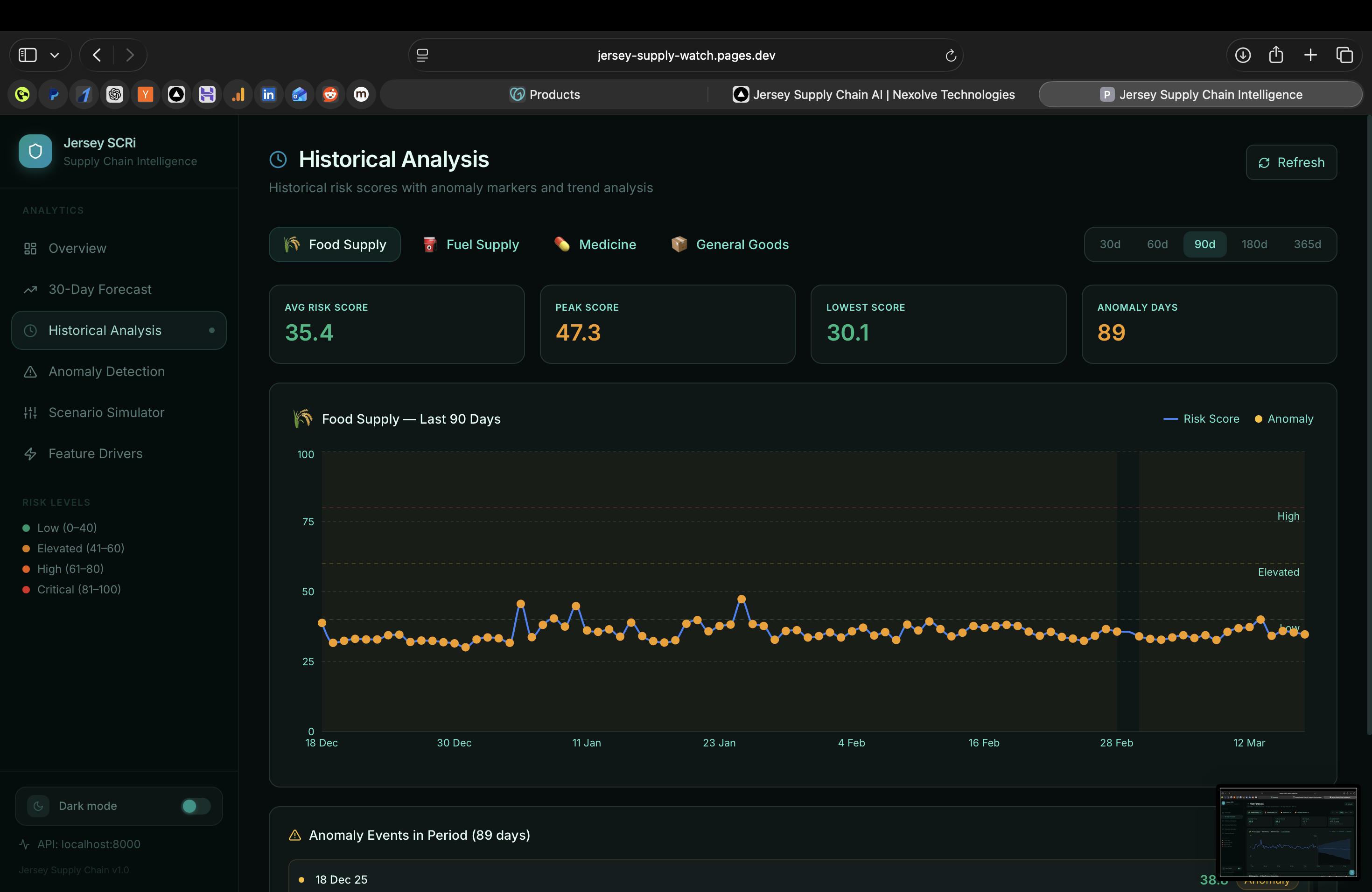

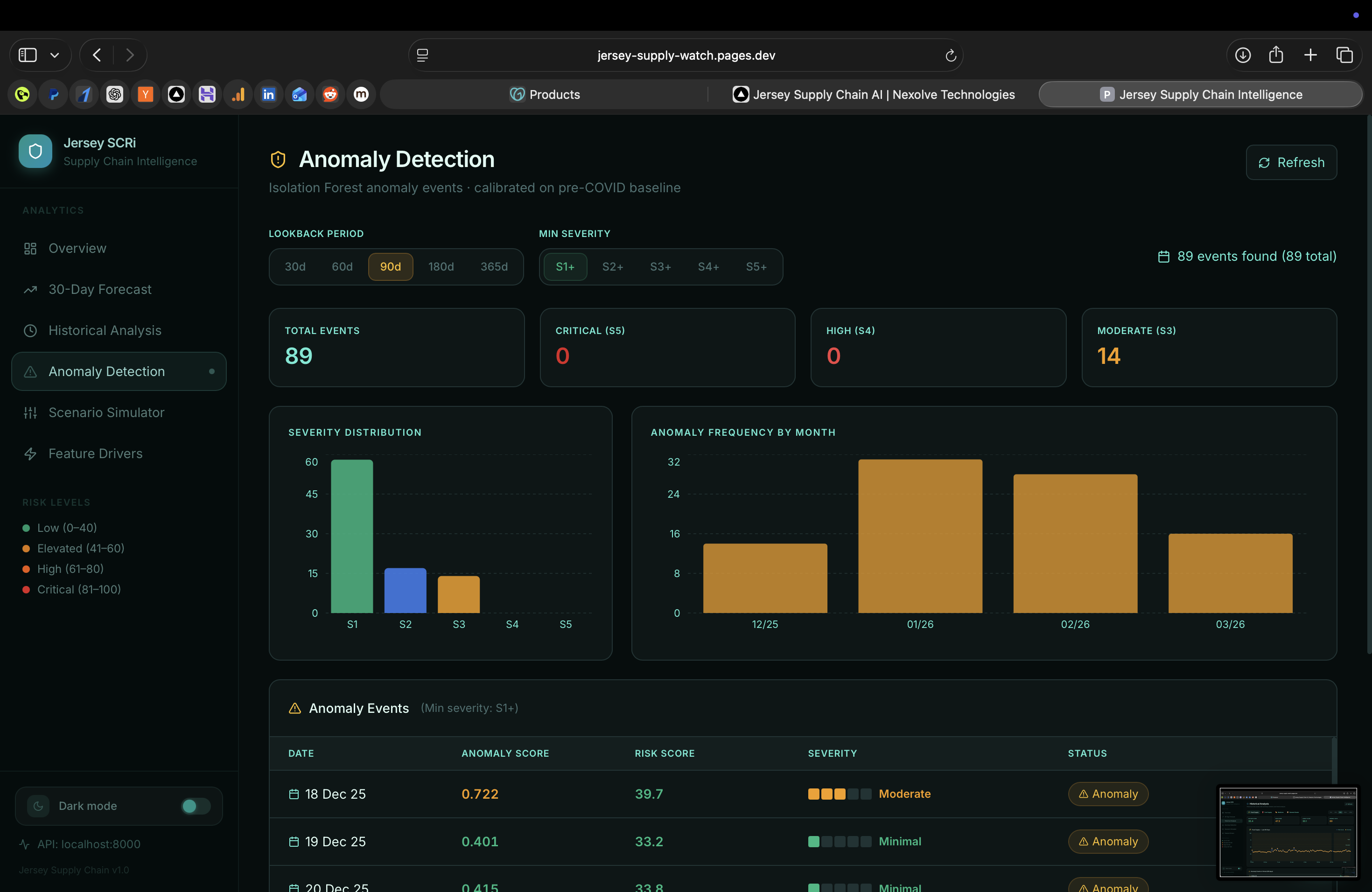

Jersey Supply Chain AI (Jersey SCRi) is a decision-support dashboard built for supply chain governance in Jersey, a small island economy with acute exposure to disruption of food, fuel, medicine, and general goods imports. The Next.js TypeScript frontend is styled with Shadcn UI and Tailwind CSS and served via Cloudflare; a FastAPI Python backend exposes the inference API, backed by PKL-serialised trained models hosted on HuggingFace. A three-model ensemble (an LSTM for temporal patterns, XGBoost for feature importance, and an isolation forest for anomaly detection), trained on 9,573 rows of supply chain history, surfaces a live composite risk score with 7-, 14-, and 30-day forecast horizons.
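As a rough illustration of how three model outputs might be folded into one composite risk score, here is a minimal Python sketch. The weights, the 0 to 1 scaling of each input, and the names `ModelOutputs` and `composite_risk` are assumptions made for illustration; the project description does not state how the ensemble is actually combined.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOutputs:
    """Per-model signals, each assumed to be pre-scaled to the range 0..1."""
    lstm_risk: float      # risk implied by the LSTM forecast (assumed scaling)
    xgboost_risk: float   # risk predicted by XGBoost (assumed scaling)
    anomaly_score: float  # isolation forest score, higher = more unusual


# Hypothetical weights; they sum to 1 so the result stays within 0..100.
WEIGHTS = {"lstm_risk": 0.4, "xgboost_risk": 0.4, "anomaly_score": 0.2}


def composite_risk(outputs: ModelOutputs) -> float:
    """Weighted average of the three signals, reported on a 0..100 scale."""
    total = 0.0
    for name, weight in WEIGHTS.items():
        value = getattr(outputs, name)
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be in [0, 1], got {value}")
        total += weight * value
    return round(100.0 * total, 1)


if __name__ == "__main__":
    sample = ModelOutputs(lstm_risk=0.5, xgboost_risk=0.25, anomaly_score=1.0)
    print(composite_risk(sample))
```

A fixed weighted average is only one option; a real system might instead learn the weights or let the anomaly signal act as an override.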

How It Works

1. The Next.js TypeScript frontend queries the FastAPI inference endpoint for live risk scores and forecast data; Cloudflare caches non-real-time responses at the edge and provides DDoS protection for the public-facing dashboard.

2. The FastAPI backend loads the PKL-serialised LSTM, XGBoost, and isolation forest models from HuggingFace model repositories once at startup, so the REST API serves low-latency inference without per-request model loading overhead.

- 3

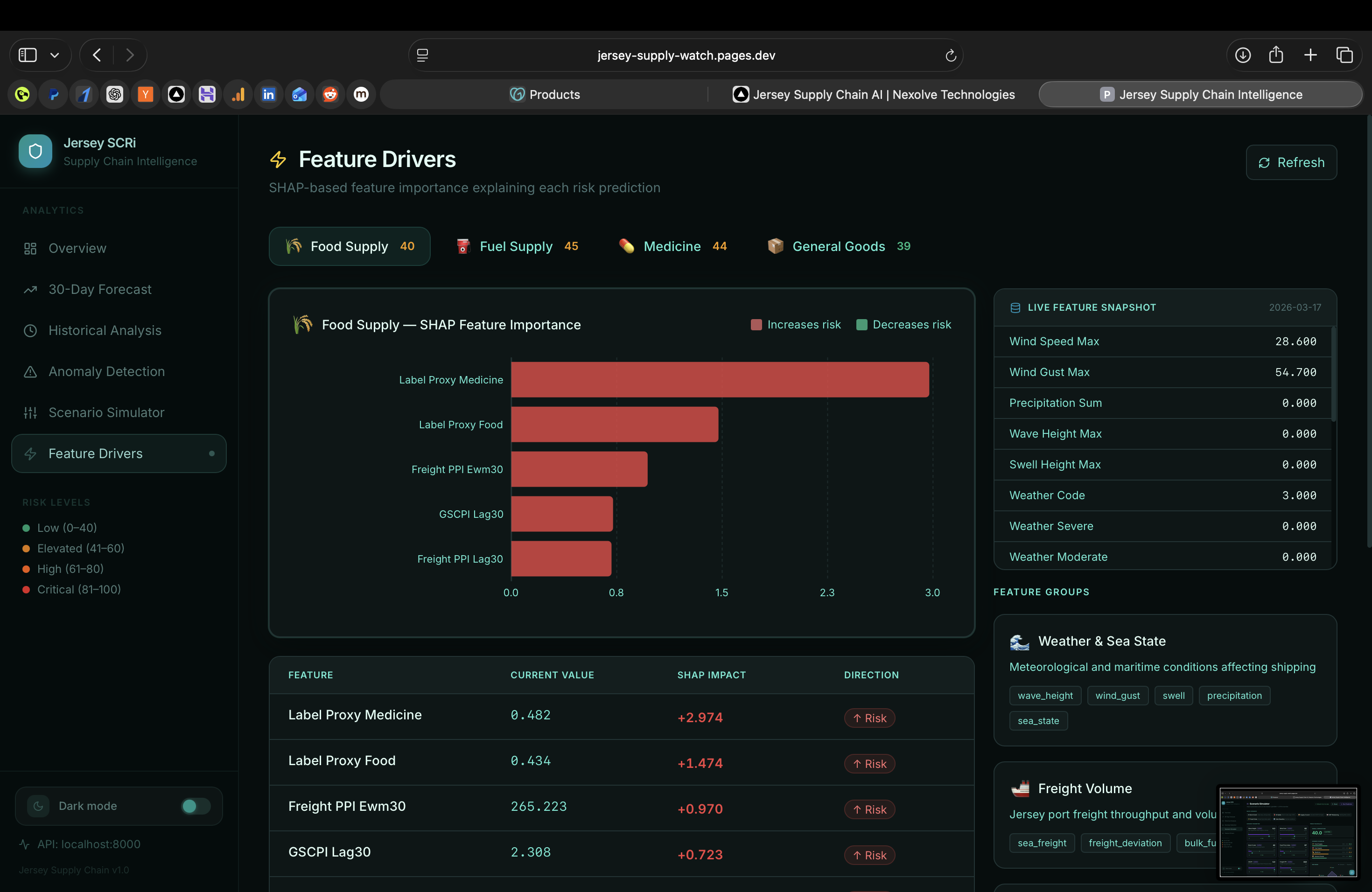

The LSTM sequence model captures temporal dependencies and seasonal cycles in import volumes; XGBoost provides interpretable feature importance rankings per supply category (Food, Fuel, Medicine, General Goods).

4. The isolation forest anomaly detector flags statistical outliers, triggering an Anomaly Detected badge with a severity score (1–5) when readings deviate significantly from the rolling historical baseline.

- 5

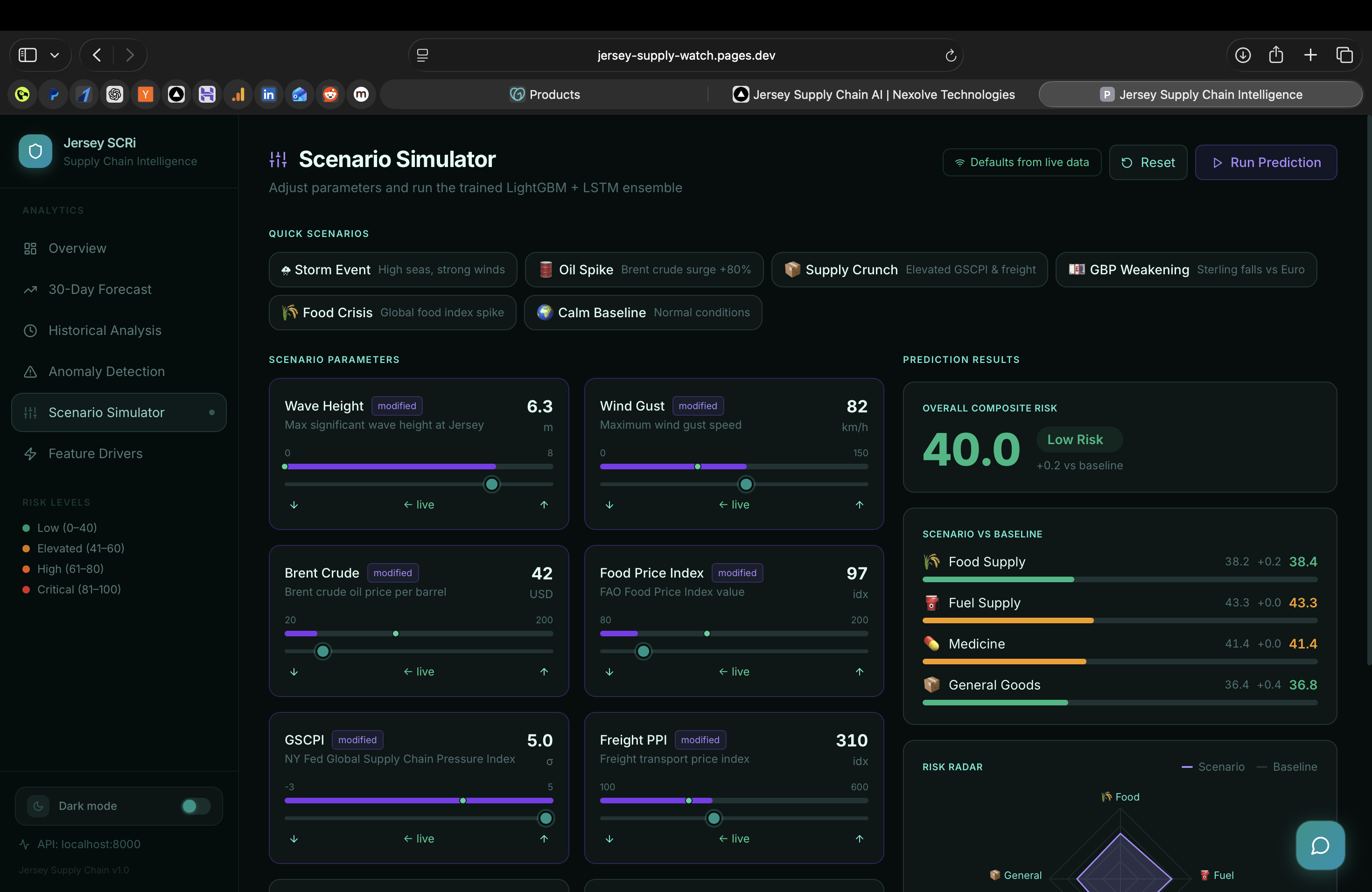

The Scenario Simulator lets analysts stress-test hypotheticals (e.g., 'what if fuel imports drop 30% for 2 weeks?') by injecting perturbations into the FastAPI model endpoints and re-running the 30-day forecast in real time.
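The anomaly severity and scenario steps above can be sketched in a few lines of Python. This example is illustrative only: it scores a reading against a rolling baseline using a z-score (a simpler stand-in for the isolation forest), maps the result to a 1–5 severity, and scales part of a series the way a scenario such as a 30% fuel drop for two weeks might be encoded. The function names `severity` and `apply_drop`, the thresholds, and the use of 0 for "no anomaly" are all assumptions, not the project's actual logic.

```python
from statistics import mean, pstdev


def severity(reading: float, baseline: list[float]) -> int:
    """Map deviation from a rolling baseline to a 1..5 severity (0 = no badge).

    Uses a z-score as a stand-in for the isolation forest; the thresholds
    are hypothetical.
    """
    spread = pstdev(baseline)
    if spread == 0:
        # Flat baseline: any departure at all is treated as maximally unusual.
        return 0 if reading == baseline[0] else 5
    z = abs(reading - mean(baseline)) / spread
    if z < 2.0:
        return 0                   # within normal variation
    return min(5, int(z) - 1)      # z in [2, 3) -> 1, ..., z >= 6 -> 5


def apply_drop(series: list[float], drop: float, start: int, days: int) -> list[float]:
    """Return a copy of `series` scaled by (1 - drop) for `days` days from `start`."""
    if not 0.0 <= drop <= 1.0:
        raise ValueError("drop must be a fraction between 0 and 1")
    end = min(len(series), start + days)
    return [v * (1.0 - drop) if start <= i < end else v
            for i, v in enumerate(series)]
```

Under these assumptions, `apply_drop(forecast_input, 0.30, start=0, days=14)` would encode the fuel scenario, and the perturbed series would then be sent to the forecast endpoint to re-run the 30-day horizon.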
